Model-predictive control

Computing a globally optimal control policy is generally intractable, especially when hard constraints must be satisfied. Model-predictive control (MPC) circumvents this issue by instead recomputing, at every control cycle, an optimal control “trajectory”, i.e. a sequence of optimal actions to take from the current state of the system. The controller therefore solves an optimization problem at each control cycle to find a command that optimizes the predicted future behavior of the robot. This approach enables very versatile and reactive behaviors: a simple change in the cost function that defines the goal of the controller leads to a different behavior without the need for a new algorithm. In addition, the action taken at every instant is optimal with respect to the problem solved at that instant, and the constraints of that problem are enforced.
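The receding-horizon loop described above can be sketched in a few lines of Python. This is only a minimal illustration under strong assumptions, not one of the solvers developed in this research: the single-integrator model, the quadratic cost, the box constraint on the command, the projected-gradient solver, and the names `rollout`, `solve_ocp` and `run_mpc` are all hypothetical choices made for the example.

```python
def rollout(x0, controls, dt):
    """Predict x_1..x_N for the assumed model x_{k+1} = x_k + dt * u_k."""
    states, x = [], x0
    for u in controls:
        x = x + dt * u
        states.append(x)
    return states


def solve_ocp(x0, goal, warm_start, dt, r, u_max, iterations=200):
    """Projected-gradient solve of the horizon problem
    min sum_k (x_{k+1} - goal)^2 + r * u_k^2  subject to |u_k| <= u_max."""
    n = len(warm_start)
    controls = list(warm_start)
    # Step size = 1 / (upper bound on the largest eigenvalue of the Hessian).
    step = 1.0 / (2.0 * r + dt * dt * n * (n + 1))
    for _ in range(iterations):
        states = rollout(x0, controls, dt)
        tail = 0.0  # running sum of 2 * (x_j - goal) over the states u_k affects
        for k in reversed(range(n)):
            tail += 2.0 * (states[k] - goal)
            grad = 2.0 * r * controls[k] + dt * tail
            # Gradient step followed by projection onto the box constraint.
            controls[k] = max(-u_max, min(u_max, controls[k] - step * grad))
    return controls


def run_mpc(x0=0.0, goal=1.0, horizon=20, steps=50, dt=0.1, r=0.1, u_max=1.0):
    """Closed loop: re-solve at every control cycle, apply only the first action."""
    x, plan, applied = x0, [0.0] * horizon, []
    for _ in range(steps):
        plan = solve_ocp(x, goal, plan, dt, r, u_max)
        u = plan[0]              # only the first planned action is executed
        applied.append(u)
        x = x + dt * u           # the plant is assumed to match the model exactly
        plan = plan[1:] + [0.0]  # shifted plan warm-starts the next solve
    return x, applied


if __name__ == "__main__":
    x_final, applied = run_mpc()
    print("final state:", x_final, "largest command:", max(abs(u) for u in applied))
```

Changing only the `goal` argument (that is, the cost function) steers the system to a different target without touching the solver, which is the versatility mentioned above. A real robot would replace the toy model with nonlinear dynamics, where the horizon problem is no longer convex and a simple projected-gradient scheme like this one would generally not suffice.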

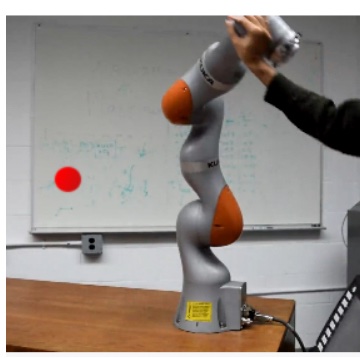

However, using MPC on real, complex robots raises important challenges: the dynamics of such robots are typically nonlinear and often non-smooth, which leads to non-convex optimization problems that must be solved at a very high frequency. In this research focus, we tackle these issues to create generic MPC algorithms that can be used on any type of robot. In particular:

- We develop generic MPC algorithms that can be used with any type of robot

- We design efficient solvers that exploit the structure of robotics problems and modern optimization techniques

- We use machine learning to speed up optimization

- We demonstrate our algorithms on complex tasks with legged robots and manipulators

Selected publications

- A. Meduri, P. Shah, J. Viereck, M. Khadiv, I. Havoutis, L. Righetti, "BiConMP: A Nonlinear Model Predictive Control Framework for Whole Body Motion Planning," IEEE Transactions on Robotics, pp. 1–18, 2023.

- A. Meduri, H. Zhu, A. Jordana, L. Righetti, "MPC with Sensor-Based Online Cost Adaptation," in 2023 IEEE-RAS International Conference on Robotics and Automation (ICRA), May 2023.

- S. Kleff, E. Dantec, G. Saurel, N. Mansard, L. Righetti, "Introducing Force Feedback in Model Predictive Control," in 2022 IEEE/RSJ International Conference on Intelligent Robots and Systems, Oct. 2022.

- S. Kleff, N. Mansard, L. Righetti, "On the Derivation of the Contact Dynamics in Arbitrary Frames (Application to Polishing with Talos)," in IEEE-RAS International Conference on Humanoid Robots, 2022.

- J. Viereck, A. Meduri, L. Righetti, "ValueNetQP: Learned one-step optimal control for legged locomotion," in Proceedings of The 4th Annual Learning for Dynamics and Control Conference, pp. 931–942, Jun. 2022.

- E. Daneshmand, M. Khadiv, F. Grimminger, L. Righetti, "Variable Horizon MPC with Swing Foot Dynamics for Bipedal Walking Control," IEEE Robotics and Automation Letters, vol. 6, no. 2, pp. 2349–2356, Apr. 2021.

- S. Kleff, A. Meduri, R. Budhiraja, N. Mansard, L. Righetti, "High-Frequency Nonlinear Model Predictive Control of a Manipulator," in 2021 IEEE-RAS International Conference on Robotics and Automation (ICRA), May 2021.